How We Deliver B200 Performance at $2/Hour

GPU infrastructure has always been sold as if performance and price are opposing forces. packet.ai removes that false trade-off.

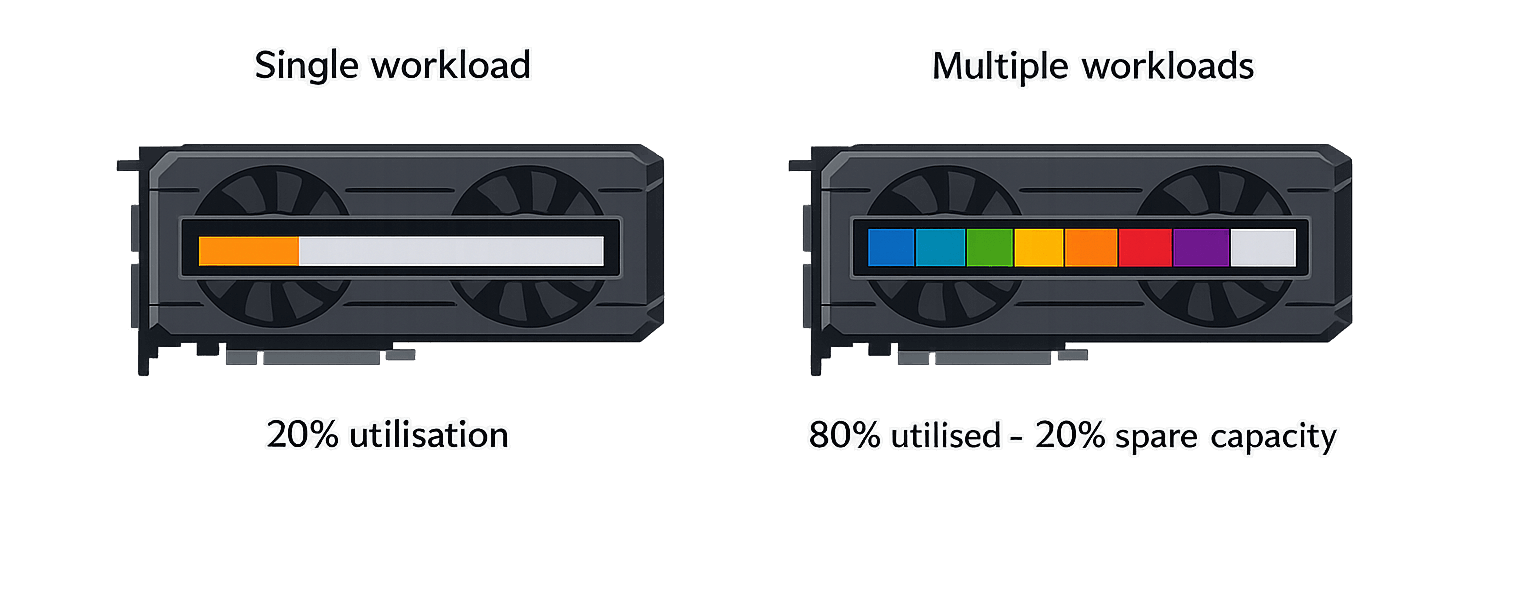

You either buy or rent dedicated GPUs and accept that large parts of the hardware will sit idle most of the time—or you oversubscribe aggressively and accept unpredictable performance, noisy neighbours, and brittle workloads.

Our GPU utilisation technology is designed around a simple observation: modern AI workloads rarely consume all aspects of a GPU at the same time. VRAM, compute, memory bandwidth, and interconnects are stressed differently depending on whether you're doing inference, fine-tuning, evaluation, or burst training.

Traditional infrastructure ignores this reality and prices GPUs as if they are a single, indivisible resource. They're not.

The Core Idea

Instead of treating a GPU as “one job, one card”, packet.ai allocates and schedules GPU resources based on what workloads actually consume in real time.

We track and manage GPU usage across multiple dimensions—not just whether a GPU is occupied, but how it is being used. This allows multiple compatible workloads to share the same physical hardware safely and predictably, without slicing the GPU into hard partitions or degrading performance.

The result is significantly higher utilisation of each GPU, while maintaining performance characteristics that feel close to dedicated infrastructure from the user's perspective.

How This Differs from Slicing and Oversubscription

A lot of platforms claim “sharing”, but what they usually mean is one of two things:

Hard Slicing (MIG, vGPU)

Technologies like MIG or vGPU carve a GPU into fixed partitions. This is simple, but inflexible. If your workload needs more VRAM but less compute, or vice versa, you're stuck paying for a shape that doesn't really fit.

Oversubscription

Multiple jobs are thrown onto the same GPU and hope for the best. This can look affordable on paper, but it creates unpredictable latency, throttling, and failure modes that are unacceptable for anything beyond experimentation.

packet.ai: Dynamic Placement

We dynamically place workloads based on live resource availability and workload profiles, enforcing isolation and fairness at the scheduler level rather than through rigid hardware partitions.

Why Performance Stays High

Performance degradation usually happens when workloads compete for the same bottleneck at the same time.

Our scheduler is built to avoid exactly that. By understanding how different workloads stress the GPU, we can co-locate jobs that complement each other rather than collide. For example, a memory-heavy inference workload can run alongside a compute-heavy task without either seeing meaningful slowdown.

When contention would impact performance, workloads are moved or queued automatically. The system prioritises predictable execution over raw density.

From the user's point of view, this feels like running on a well-behaved, dedicated GPU—not a noisy shared environment.

Why Pricing Becomes Dramatically Better

Once you can safely drive higher utilisation, the economics change completely.

Traditional GPU pricing assumes low average utilisation, so prices have to cover idle time. packet.ai removes much of that waste. The same physical GPU can do more useful work per hour, which means the cost of that GPU can be spread across more customers without degrading their experience.

That's why packet.ai consistently delivers a better performance-to-price ratio than both hyperscale cloud GPUs and typical “budget” oversubscribed offerings.

You're not paying for idle silicon. You're paying for the resources your workload actually uses.

The Win for Everyone

For Customers

Access to high-end GPUs with pricing that makes sense beyond short experiments. Predictable performance, fast startup times, and the ability to scale without being forced into long commitments or inflated hourly rates.

Workloads that would be uneconomical on dedicated GPUs suddenly become viable to run continuously.

For Infrastructure Providers

Higher utilisation means better returns on extremely expensive hardware. GPUs that would normally sit partially idle can now be monetised efficiently, without turning the platform into a support nightmare.

Providers can offer competitive pricing while protecting margins, because the underlying economics finally work.

Best of All Worlds

That's what creates the true win-win. Customers get more compute for their money. Providers get healthier economics. And GPUs finally spend their time doing what they were bought for—useful work, not sitting idle.

Ready to experience the difference?

Launch a B200 in minutes and see B200-class performance at $2/hour.